As developers, we deal with regressions on a regular basis. Regressions are changes introduced to a system that cause a potentially unwanted change in behaviour. Engineers, being wired the way they are, tend to want to fix first and understand later (or understand as part of the fix). In a large number of cases, however, it is considerably more effective to isolate and understand the cause of the regression before diving into the code to fix it.

This post continues a series I am writing on regression isolation and bisection, the first of which was “A Visual Primer on Regression Isolation via Bisection”. If you don’t have a solid understanding of bisection and regressions, I strongly suggest you read the primer first.

Typical Developer Workflows for Regressions

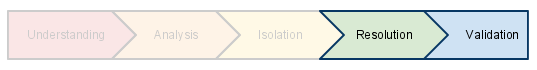

The general workflow that developers go through when dealing with a regression can be idealized and simplified as shown in the diagram above.

These stages can be summarized as:

- Understanding what the defect is, how it presents and how to reproduce it.

- Analysis as to which part of the system is responsible for exposing the defect.

- Isolation of the offending portion of the code.

- Resolution of the actual issue through understanding and implementing a code change.

- Validation that the issue has been resolved and that no new defects have been injected.

“It’s my Nature”, the Nature of Engineers

Engineers are inherently people who want to understand, fix and tinker with things. Software engineers are no different. Given the opportunity to immerse themselves in problems, code and architecture, that is where software engineers will want to be. It is very much like the fable of the Scorpion and the Frog: it’s in their nature. This plays out in engineers “adding their value” in the Understanding, Analysis and Isolation stages.

An Aside: A, B & C Players

A popular concept that has emerged recently in the startup press broadly classes individuals as “A”, “B” or “C” players. Whilst I don’t agree with such a simple classification, there are certain attributes which come through consistently. The “A”, or at least strong “B”, players are broad but strong generalists, capable of digging deep across multiple areas and making “hard things go”.

These personalities tend to be able to conceptualize broad portions of a system, almost intuitively understand problems from the outset, and have a gift for isolating an issue considerably faster than their peers. In the workflow above, these “A” players are the ones who make the first three stages appear trivial, burning through understanding, analysis and isolation faster than others.

In less structured environments, these people rise above others, becoming critical members of teams that drive the organization forward. Unfortunately, they are the cream of the crop and represent a small portion of the available staff. This leaves organizations with a problem: how do you help the remaining “B” and “C” players perform at the same level as “A” players in particular sub-domains?

Fortunately, in the context of regressions, you can. You don’t need the precious few who can take a problem and isolate the offending sub-component in a couple of hours. Of course, I’ll leave it as an exercise for the engineering manager to tell the “A” players that we are automating one of the areas in which they shine.

Code Diving

When presented with a bug, the developer will usually go through all of the stages. Depending on the “class” of developer, some may move through the stages at differing speeds. Further, some developers may implicitly be able to isolate an issue with minimal effort, just by “knowing”.

The reality, however, is that most developers will spend at least some time in almost every step of the workflow. Most real, non-trivial defects take a number of days to resolve fully and move through the workflow. The applied effort for most defects is front-loaded in the Understanding, Analysis and Isolation stages. Once you know the cause of the regression, or at the very least the failing condition, resolving and validating a fix is typically fairly fast. Here is the diagram again, but now with a more realistic split of the effort associated with a regression.

Automated Regression Isolation

As described in the first regression article, so long as you have an ordered set of builds and a repeatable way of determining the result of a particular test or benchmark, you can isolate regressions fairly easily and effectively. Automated isolation makes the early parts of the regression workflow effectively free, at least from the developer’s perspective. Developers can instead focus on the Resolution and Validation stages only.
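To make the idea concrete, here is a minimal Python sketch of bisecting over an ordered list of builds. It assumes the oldest build is known good, the newest build is known bad, and the test result flips from pass to fail exactly once in between. The function name `find_first_bad_build` and the `test_passes` callback are hypothetical and not taken from any particular tool. In a real setup, the callback would deploy a build and run the relevant test or benchmark, which is not shown here.

```python
from typing import Callable, Sequence, Tuple, TypeVar

Build = TypeVar("Build")


def find_first_bad_build(
    builds: Sequence[Build],
    test_passes: Callable[[Build], bool],
) -> Tuple[Build, Build]:
    """Return (last_good, first_bad) for an ordered sequence of builds.

    Assumption: builds[0] passes, builds[-1] fails, and the result flips
    from pass to fail exactly once. The endpoints are not re-tested.
    """
    if len(builds) < 2:
        raise ValueError("need at least a known-good and a known-bad build")

    good, bad = 0, len(builds) - 1  # invariant: good passes, bad fails
    while bad - good > 1:
        mid = (good + bad) // 2
        # Each test run halves the range that can contain the regression.
        if test_passes(builds[mid]):
            good = mid
        else:
            bad = mid
    return builds[good], builds[bad]
```

Because each run halves the remaining range, 1,000 builds need only about ten test runs (ceil(log2(999)) = 10) rather than up to a thousand. For example, if the regression hypothetically landed in `build-637`, calling `find_first_bad_build([f"build-{n}" for n in range(1000)], lambda b: int(b.split("-")[1]) < 637)` returns `("build-636", "build-637")`.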

When looking at the applied effort, we begin to see the real value of isolating regressions first.

You can easily save 50%, if not upwards of 80%, of the effort associated with a defect if you rely on automated regression isolation. This allows the “B” and “C” players to have just as much of an effect on a development team as the “A” players historically would. Ideally, this should also allow the “A” players to get in, add a fix and get out, moving on to building or improving the product that the team is creating, rather than isolating and resolving regressions.

Even without the benefit of an automated system, judicious use of bisection can still reap benefits, even if developers or testers need to bisect through the builds manually to isolate an issue.
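When bisection is done by hand, the main chore is keeping track of which builds are known good and known bad between test runs. The sketch below is a hypothetical `ManualBisection` helper in Python that tracks this state in the spirit of `git bisect`: ask for the next build to try, record whether it passed, and repeat. It assumes the builds are listed oldest to newest, that the first is known good, and that the last is known bad.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class ManualBisection:
    """Hypothetical helper for a bisection performed by a person.

    Assumption: builds are ordered oldest to newest, builds[0] is known
    good and builds[-1] is known bad.
    """

    builds: Sequence[str]
    good: int = 0   # index of the newest build known to pass
    bad: int = -1   # index of the oldest build known to fail

    def __post_init__(self) -> None:
        if len(self.builds) < 2:
            raise ValueError("need at least a known-good and a known-bad build")
        if self.bad < 0:
            self.bad = len(self.builds) - 1

    def next_build(self) -> Optional[str]:
        """Build to test next, or None once the regression is isolated."""
        if self.bad - self.good <= 1:
            return None
        return self.builds[(self.good + self.bad) // 2]

    def record(self, passed: bool) -> None:
        """Record the manual test result for the build from next_build()."""
        if self.bad - self.good <= 1:
            raise RuntimeError("bisection already finished")
        mid = (self.good + self.bad) // 2
        if passed:
            self.good = mid
        else:
            self.bad = mid

    def culprit(self) -> Optional[str]:
        """First bad build once finished; None while testing continues."""
        return self.builds[self.bad] if self.next_build() is None else None
```

A tester would call `next_build()`, run that build by hand, then call `record(True)` if it passes or `record(False)` if it fails, repeating until `next_build()` returns `None`. At that point, `culprit()` names the first bad build.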

Some Thoughts on the Benefits

The benefits of isolating a regression, rather than diving into the code, are manifold. If you can isolate the regression and feed it back to the regressing developer quickly, you get the following benefits:

- Temporal awareness: the rationale for the change is still fresh in the developer’s mind.

- The regressing developer gains a deeper awareness of the original change.

- Developers become more sensitive to the rate at which they may be injecting regressions.

Diving into the code has some benefits, but also some drawbacks, including:

- It splits the injection of a regression and its resolution across different developers, who consequently have differing views and understanding of the code in question. This increases the likelihood that a new regression will be introduced.

- It lowers the impact on the originating developer, which begins to foster a “throw it over the fence” culture unless careful steps are taken.

Finally, as I was completing this article, I discovered this quote from the “Mature Scrum at Systematic” article in Methods & Tools, an online software engineering magazine:

> You can only deliver high quality sprint deliveries every month, if you address defects immediately they are identified as opposed to store and fix them later.

This rings true in my mind. Defects isolated while a system is being changed are considerably cheaper to fix than systemic defects introduced through poor requirements or incomplete implementations. Hence, focusing on isolating a regression, rather than debugging it later, will increase the effectiveness of any software team.

As per usual, feel free to contact me with feedback, or make comments below.

Good read. Will follow your series on regression stuff.

Appreciated the article and assertion but would have loved to see more examples of how this is implemented in practice.